UDL Tip of the Month 2026

Cutting Through the Noise to Build Better Choice Rubrics

By Marc Thompson (CITL)

One of the most common challenges instructors encounter when offering Multiple Means of Action and Expression is not coming up with assignment options but designing a single rubric that works well across all of them (Thompson, 2024). When students can choose between an essay, a presentation, a podcast, or another format, instructors often struggle to identify criteria that are equally relevant to every assignment option. In situations like this, rubrics can drift toward format-specific features, or instructors cave and create separate rubrics for each assignment option. Either way, consistency tends to suffer.

This is where thinking in terms of signal-to-noise ratio can be especially useful. Signal-to-noise thinking can help instructors identify which criteria should remain constant across formats because they reflect the learning outcomes, and which criteria are better treated as flexible, contextual, or secondary.

Signal, Noise, and Rubric Design

In educational assessment, the "construct" is the specific knowledge, skill, or capability an assessment is intended to measure (Messick, 1989). That construct is the "signal." A well-designed rubric makes that signal visible and stable across student work. The difficulty is that student submissions often include additional demands that are not central to the construct: time constraints, tool fluency, familiarity with genre conventions, or comfort with specific modes of communication. These factors can introduce "noise," not because they are unimportant in general, but because they aren't always relevant to what the assignment is meant to assess.

When instructors design rubrics for multiple submission options, this noise can become especially pronounced. Criteria that work well for one format may feel awkward, irrelevant, or overly restrictive for another. Signal-to-noise thinking provides a way through that problem. It starts by identifying the signal, then designing rubric criteria that capture that signal in ways that apply across formats (CAST, 2018; Meyer et al., 2014). A "signal-first choice rubric" does exactly that. It anchors evaluation to the learning outcomes and uses the same core criteria for each of the assignment options, while at the same time allowing the form of students' action and expression to vary.

The following checklist offers some strategic questions to ask when designing a signal-first choice rubric:

Design Checklist for Signal-first Choice Rubric

- Name the signal: What specific knowledge, skill, or capability is this assignment designed to measure?

- Anchor criteria to outcomes: Do all rubric criteria clearly align with the stated learning outcomes?

- Test for cross-format relevance: Would each criterion still make sense if the student chose a different approved assignment format?

- Identify potential noise: Are timing, tool proficiency, genre fluency, or production polish influencing the score without directly supporting the learning outcome?

- Be intentional about conventions: If disciplinary conventions matter and must be measured, are they explicitly named and tied to the construct?

- Check for equity and clarity: Can students easily see what counts as evidence of learning, regardless of how they choose to express it?

If each of these checklist items can be answered confidently, the rubric is likely doing a good job of keeping the signal clear while allowing meaningful choice across a variety of assignment options.

Examples of Signal-First Choice Rubrics Across Disciplines

The following assignment examples illustrate how consistent, relevant rubric criteria can be applied across different submission formats without privileging one assignment option over another.

Example 1: History Assignment

Assignment Instructions

Students will develop an interpretive analysis of a historical question using primary sources. The submission must advance a defensible historical claim and support that claim through contextualized analysis of multiple primary sources. Students may choose one of the approved formats below. All formats will be evaluated using the same criteria.

Approved formats:

- An 8–10 page analytical essay

- A 12–15 minute narrated digital exhibit

- A structured podcast episode with an annotated source record

Signal-First Choice Rubric (History)

| Criterion | Exemplary | Proficient | Developing |
|---|---|---|---|
| Interpretive Claim | Advances a clear, original historical interpretation that drives the analysis | Presents a coherent interpretation with minor lapses | Relies largely on description or an implicit claim |
| Use of Primary Sources | Analyzes sources for perspective, purpose, and limitation | Uses sources appropriately with uneven analysis | Treats sources primarily as factual records |
| Contextualization | Situates sources convincingly within broader historical contexts | Provides relevant context with limited integration | Context is minimal or disconnected |
| Synthesis | Connects sources to each other in service of the argument | Some connections are made | Sources remain largely isolated |
| Scholarly Practice | Uses attribution and citation conventions appropriate to the medium | Minor inconsistencies in scholarly practice | Significant problems with attribution or framing |

In this rubric, scholarly practice is included because transparent use of evidence is part of the historical construct itself. Criteria remain relevant whether the work is written, narrated, or exhibited digitally.

Example 2: Biology Assignment

Assignment Instructions

Students will interpret experimental results from a provided dataset and explain what the data suggest about an underlying biological process. The submission must connect claims directly to evidence and demonstrate understanding of the experimental design. Students may choose one of the formats below.

Approved formats:

- A written Results and Discussion section

- A recorded research briefing with annotated figures

- An interactive lab report using R Markdown or Jupyter Notebook

Signal-First Choice Rubric (Biology)

| Criterion | Exemplary | Proficient | Developing |
|---|---|---|---|
| Data Interpretation | Accurately explains trends, variability, and anomalies | Identifies major patterns with minor errors | Misinterprets data or describes without analysis |
| Evidence-based Reasoning | Claims are explicitly tied to figures or statistics | Evidence is present but unevenly integrated | Claims are weakly supported |
| Experimental Design Awareness | Clearly explains controls, variables, and limitations | General awareness of design features | Limited or incorrect understanding |
| Biological Explanation | Uses appropriate biological mechanisms and models | Explanations are plausible but underdeveloped | Explanations are vague or inaccurate |
| Research Communication | Follows core conventions for presenting scientific results | Conventions mostly followed | Conventions interfere with interpretation |

Here, communication conventions are assessed because they support accurate interpretation of data. The same criteria apply across all formats, even though the presentation of results may differ substantially.

Example 3: Sociology Assignment

Assignment Instructions

Students will apply one or more sociological theories to analyze a contemporary social issue or case. The submission must demonstrate accurate theoretical application and sustained sociological reasoning. Students may select from the formats below.

Approved formats:

- An analytic paper

- A policy memo written for a defined stakeholder audience

- A recorded case analysis with a written analytic outline

Signal-First Choice Rubric (Sociology)

| Criterion | Exemplary | Proficient | Developing |
|---|---|---|---|
| Theoretical Application | Applies theory insightfully to explain social dynamics | Applies theory correctly with limited nuance | Theory is referenced but poorly applied |
| Analytical Depth | Examines causes, structures, and implications | Analysis present but uneven | Largely descriptive |
| Use of Evidence | Integrates course readings or empirical sources effectively | Uses relevant evidence | Evidence is minimal or disconnected |
| Argument Coherence | Develops a sustained sociological argument | Argument mostly coherent | Reasoning fragmented |
| Genre Awareness | Adapts tone and structure effectively to audience and purpose | Some genre awareness | Genre interferes with clarity |

In this example, genre awareness is assessed because sociological analysis often circulates in professional and public contexts. The criteria remain focused on sociological reasoning rather than format-specific polish.

Why Signal-to-Noise Matters for Choice Rubrics

Designing a rubric that works across multiple assignment options is difficult because formats vary. Signal-to-noise thinking offers a way to manage that complexity. By first identifying the construct and treating that as the stable signal, instructors can develop consistent, relevant criteria that apply across the different assignment options students are allowed to choose from. The result is a choice rubric that supports UDL's Multiple Means of Action and Expression while at the same time maintaining rigor, fairness, and alignment with stated learning outcomes. Ultimately, when the signal is clear and the noise is intentionally managed, both students and instructors have a better shared understanding of what counts as evidence of learning.

References

- CAST. (2024). The UDL Guidelines. http://udlguidelines.cast.org

- Messick, S. (1989). Validity. In R. L. Linn (Ed.), Educational Measurement (3rd ed., pp. 13–103). Macmillan.

- Meyer, A., Rose, D. H., & Gordon, D. (2014). Universal Design for Learning: Theory and Practice. CAST.

- Thompson, M. (2024, January). UDL Principle 3: Multiple Means of Action and Expression. https://citl.illinois.edu/udl-tip-month-2024

High Impact, Low Effort: Quick UDL Self-checks for Actionable Course Improvements

By Marc Thompson

Courses often grow in layers: new readings to fill gaps, longer assignment instructions to prevent confusion, and an ever-expanding set of policies to manage edge cases. Over time, these well-intentioned changes can unintentionally make learning harder by increasing cognitive load, obscuring priorities, or limiting how students engage. This month we consider some quick, targeted course self-checks that can help identify where course design creep may be creating friction.

Unlike the structured, long-term reflection and revision cycles covered in last year's November UDL Tip of the Month article on instructor reflection and continuous improvement (Thompson, 2025a), UDL self-checks can help instructors evaluate existing course evidence to make quick, high-impact course adjustments. They are short, deliberate reviews that occur at strategic junctures, such as after a major assignment, mid-semester, or during course preparation. UDL self-checks ask practical questions like "Where is the course design creating friction that makes it harder than it needs to be right now?" and provide actionable insights without the time commitment of a full reflection cycle. At the same time, it's good to keep in mind that while self-checks are aimed at quick improvements, they can also contribute to broader, long-term reflection cycles by helping instructors notice patterns now that they can target for more extensive redesign plans later (IRIS Center, 2026).

It’s also important to distinguish UDL self-checks from accessibility checks, though the two can overlap in some ways. Accessibility reviews ensure technical requirements are met for optimizing access to digital content (e.g., heading structure, captioning, color contrast, screen-reader compatibility), while UDL self-checks focus more on inclusive practices that provide greater instructional clarity, flexibility, and engagement. In this context, a course may meet accessibility standards yet still present barriers if assignment choices are rigid or students don’t understand the purpose of various course activities. Think of UDL self-checks as quick diagnostic scans of learning experiences that complement accessibility reviews, rather than supplanting them. For more on this symbiotic relationship, see "UDL vs. Accessibility: What's the Difference and How Do They Work Together?" (Thompson, 2025b).

Three Passes Through Your Course

A good catalyst for jump-starting a UDL self-check is to ask yourself, "What part of my course currently requires the most explanation, and what design adjustment could reduce that overhead?" This question helps focus attention on areas most likely to create barriers for students. Once you have a general sense of where to look, you can work your way through a course scan in three targeted passes, with each pass zeroing in on a different UDL instructional pathway.

Pass 1: Representation: Can Students Access and Interpret Key Ideas?

Self-check questions:

- Are key ideas available in multiple forms?

Example: Students struggling to follow a dense reading can also access a narrated slide summary or infographic.

- Do complex materials include orientation or interpretive support?

Example: A dense history article is accompanied by a timeline, annotated glossary, and discussion guide.

- Could students who miss class still grasp core ideas?

Example: Lecture recordings, short video summaries, and annotated lecture slides help students to catch up.

Where and how to look:

- LMS analytics: Are students quickly leaving dense reading pages?

- Discussion boards: Are posts focused on confusion about instructions rather than ideas?

- Email patterns: Are repeated clarification questions common?

- Assignment results: Do students miss questions tied to specific resources?

- Student reflections or surveys: Do they indicate misunderstanding of key concepts?

Examples of strategies to reduce representation barriers:

- Mathematics: Pair symbolic proofs with narrated explanations or step-by-step guided videos.

- Biochemistry: Combine pathway diagrams with short, written process summaries.

- History: Add guiding questions, contextual maps, and annotated primary sources.

- Sociology: Provide both theoretical diagrams and applied case study examples.

- Economics: Offer narrative walkthroughs of equations alongside symbolic formulations.

- Education: Present lesson examples alongside theoretical concepts, including short exemplars of classroom implementation.

- Computer Science: Provide code snippets with explanatory comments, plus flowcharts showing algorithm steps.

Pass 2: Action & Expression: Can Students Show Learning Without Format Barriers?

Self-check questions:

- Are all assessments in the same mode?

Example: Every unit uses timed essays, even when some questions could be answered via infographic, oral explanation, or interactive simulation.

- Are instructions clear enough to avoid confusion?

Example: Students lose points for missing implied components like “cite at least two peer-reviewed sources.”

- Could executive-function demands overshadow content goals?

Example: A multi-stage project requires students to plan topic, select format, and schedule milestones simultaneously, without guidance or scaffolding.

Where and how to look:

- Assignment submissions: Are multiple students misinterpreting the same instructions?

- Rubric comments: Are repeated clarifications needed across submissions?

- Choice patterns: Are certain options consistently avoided (e.g., no students choose the video option)?

- Office hours or discussion posts: Are logistical questions overshadowing conceptual questions?

- Peer review feedback: Are students struggling with mechanics instead of content?
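For instructors comfortable with a little scripting, the choice-pattern check above can be run as a quick tally. The following is a minimal sketch, not a prescribed method: the function name `avoided_options`, the 10% cutoff, and the sample data are all hypothetical, and the list of chosen formats is assumed to come from whatever export or manual count is available.

```python
from collections import Counter

def avoided_options(approved, choices, min_share=0.1):
    """Return approved formats chosen by fewer than min_share of students.

    approved: list of format names offered in the assignment (hypothetical).
    choices:  list with one entry per submission naming the format used.
    min_share: assumed cutoff; adjust to suit class size.
    """
    total = len(choices)
    if total == 0:
        # No submissions yet, so every option counts as unused.
        return list(approved)
    counts = Counter(choices)
    return [opt for opt in approved if counts[opt] / total < min_share]

# Hypothetical data: ten submissions, none using the video option.
approved = ["essay", "podcast", "video"]
choices = ["essay"] * 7 + ["podcast"] * 3
print(avoided_options(approved, choices))  # ['video']
```

An option that shows up in this list is a prompt for a follow-up question (are the instructions or tool demands for that format creating a barrier?), not evidence of a problem on its own.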

Examples of strategies to reduce barriers to action & expression:

- Political Science: Allow analysis via briefing memo or short paper, with shared grading criteria.

- English Literature: Provide options for a close reading, an annotated text, or a comparative analysis.

- Biology: Accept written explanations or narrated slide presentations with visuals.

- Engineering: Pair problem sets with short written reflections explaining design choices.

- Education: Allow critique of a lesson plan as a written template or annotated rubric.

Pass 3: Engagement: Can Students Sustain Effort and See Value?

Self-check questions:

- Where do students commonly disengage or fall behind?

- Do students understand why activities matter?

- Are pacing and effort expectations clear? Are students underestimating the time needed for assignments?

Where and how to look:

- Gradebook trends: Identify drop-offs in submissions.

- Late or missing work: Look for clustering around specific assignments or weeks.

- Discussion participation: Are there patterns of declining activity mid-term?

- Assignment descriptions: Is the task’s purpose explicitly stated?

- Student reflections, journal entries, or surveys: Do they reveal confusion about relevance or effort?
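The gradebook and late-work checks above can also be approximated with a short script when submission data can be exported. This is a rough sketch under stated assumptions: the status labels (`on_time`, `late`, `missing`), the 30% threshold, the function names, and the sample records are hypothetical and would need to be adapted to the actual gradebook export.

```python
from collections import Counter

def late_or_missing_rates(records):
    """Share of late or missing submissions per assignment.

    records: iterable of (assignment, status) pairs, where status is
    'on_time', 'late', or 'missing' (assumed labels).
    """
    totals = Counter()
    flagged = Counter()
    for assignment, status in records:
        totals[assignment] += 1
        if status in ("late", "missing"):
            flagged[assignment] += 1
    return {a: flagged[a] / totals[a] for a in totals}

def friction_points(records, threshold=0.3):
    """Assignments whose late-or-missing rate meets the assumed threshold,
    listed from highest rate to lowest."""
    rates = late_or_missing_rates(records)
    hits = [a for a, r in rates.items() if r >= threshold]
    return sorted(hits, key=lambda a: rates[a], reverse=True)

# Hypothetical records for four students across two assignments.
records = [
    ("Week 1 quiz", "on_time"), ("Week 1 quiz", "on_time"),
    ("Week 1 quiz", "on_time"), ("Week 1 quiz", "on_time"),
    ("Week 3 essay", "on_time"), ("Week 3 essay", "late"),
    ("Week 3 essay", "missing"), ("Week 3 essay", "on_time"),
]
print(friction_points(records))  # ['Week 3 essay']
```

A cluster flagged this way only shows where to look; the assignment description and student reflections still have to explain why effort dropped off there.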

Examples of strategies to boost engagement:

- Statistics: Provide low-stakes practice sets or quick quizzes to reinforce learning.

- Chemistry: Break lab reports into staged submissions with feedback at each step.

- History: Ask students to relate themes to current events or local examples.

- Computer Science: Share exemplar solutions and provide guided walkthroughs of challenging assignments.

- Public Health: Connect assignments to authentic professional scenarios or case studies.

- Business: Include reflection prompts linking project work to career goals or personal interests.

- Education: Offer small, meaningful peer review or group tasks to sustain motivation.

Prioritizing Your "Plus-One" Change

UDL doesn't typically involve a total course overhaul. It’s more about sustainable, incremental growth. Following the Plus-One approach (Tobin & Behling, 2018), the goal with UDL self-checks is to identify one barrier and provide one alternative. After completing your three-pass scan, look for the high-friction areas where students struggle most. You might choose one priority adjustment from the examples below, or use them as a springboard to identify an adjustment that targets a specific barrier that surfaced during your UDL self-check:

Possible Strategies for Addressing Design Friction:

- Clarify Purpose: Rewrite assignment instructions to foreground the "why" (purpose) and the "how" (success criteria).

- Contextualize Content: Add brief “Why this matters” notes to challenging readings or videos.

- Model Success: Provide an exemplar or template for complex projects.

- Early Detection: Introduce a low-stakes checkpoint to surface misunderstandings before they become grades.

- Streamline Navigation: Simplify the student experience by consolidating or restructuring cluttered course pages.

- Check for Alignment: Ensure your rubric language explicitly matches your learning outcomes.

- Manage Cognitive Load: Adjust pacing or redistribute effort across heavy-workload weeks.

- Flexible Participation: Offer an alternate discussion mode (e.g., audio, video, or structured text prompts).

- Provide Scaffolding: Add optional tools like visual organizers, a glossary, key words, guiding questions, or calculation aids.

Putting it All Together

By taking time to perform targeted UDL self-checks, instructors can locate friction points that might otherwise go unnoticed, and make small, actionable improvements that enhance clarity, flexibility, and engagement. The focus is on practical, high-impact adjustments rather than wholesale redesign. Over time, these quick scans can help courses evolve incrementally, fostering a learning environment that supports the success of all students.

References

- Burgstahler, S. E. (2015). Universal Design in Higher Education: From Principles to Practice (2nd ed.). Harvard Education Press.

- CAST. (2018). Universal Design for Learning Guidelines version 2.2. http://udlguidelines.cast.org

- IRIS Center. (2026). Designing with UDL. https://iris.peabody.vanderbilt.edu/module/udl/cresource/q2/p08/#content

- Thompson, M. (2025a). UDL and Instructor Reflection: Designing for Continuous Improvement. Center for Innovation in Teaching & Learning.

- Thompson, M. (2025b). UDL vs. Accessibility: What's the Difference and How Do They Work Together? Center for Innovation in Teaching & Learning.

- Tobin, T. J., & Behling, K. (2018). Reach everyone, teach everyone: Universal design for learning in higher education. West Virginia University Press.

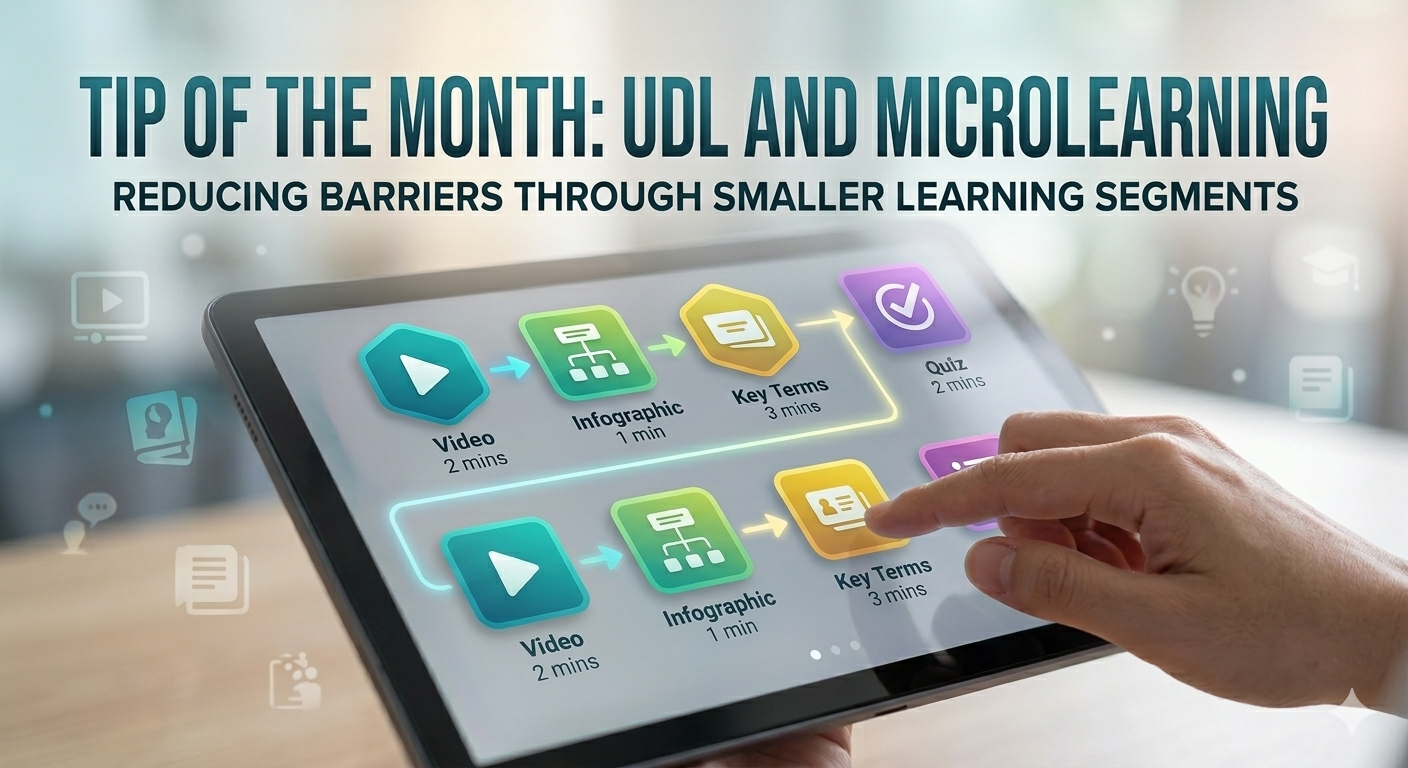

UDL and Microlearning: Small Changes, Big Impact

By Marc Thompson

Reducing Barriers through Segmented Content

Short, focused microlearning segments can significantly improve engagement and retention when designed intentionally. When viewed through a UDL lens, microlearning is more than shorter videos or chunking content; it’s about reducing cognitive load, increasing flexibility, and offering multiple ways for learners to access and engage with content.

For effective microlearning, consider breaking content into 5- to 10-minute segments, each tied to a single concept and paired with a brief activity. Research on multimedia learning and Mayer’s “segmenting principle” suggest that learners process information more effectively when it is segmented and focused, rather than presented in long, continuous streams (Mayer, 2021). From a UDL perspective, this principle supports perception and comprehension (CAST, 2024c) by helping learners manage cognitive load and revisit key ideas as needed (CAST, 2024b).

Aligning Objectives with Action and Expression

Effective microlearning also requires tight alignment and strategic application. For optimal effectiveness, each segment should connect to a learning objective and include an opportunity to apply or check understanding. This is where microlearning can support multiple means of Action & Expression. Learners aren’t just consuming content but actively using it and building on it in multiple low-stakes ways (CAST, 2024a). Without alignment and application, microlearning can become fragmented and less effective.

Building Momentum Toward Broader Goals

Microlearning and application can be bundled into modules or units that allow learners to see individual pieces and how they connect to broader goals. This design approach aligns with the “executive functions” of the UDL framework’s strategic network by helping learners understand, plan, and monitor progress (CAST, 2024d). The following are some discipline-specific examples of how microlearning activities can be structured and sequenced to achieve broader learning outcomes or skills.

UDL-focused Microlearning Sequence Examples

Biochemistry (Metabolic Pathways)

Microlearning Strategies: This sequence moves from visualizing a complex system to identifying its parts, and finally to analyzing how those parts interact. By mastering the building blocks first (Mayer, 2021), students are better prepared to tackle the "big picture" logic of the system.

- Segment 1: Overview video + labeled diagram

- Segment 2: Interactive labeling of pathway steps

- Segment 3: Prediction question on regulation points

Political Science (Case-Based Learning)

Microlearning Strategies: Encourages relevance and transfer (Ambrose et al., 2010). This learning progression moves from the acquisition of a foundational concept (federalism) to its contextualization through a real-world example (short case study), culminating in an active application task (2- to 3-question application prompt) that facilitates the transfer of knowledge to new and diverse scenarios.

- Micro-lesson on a foundational concept (e.g., federalism)

- Short case example (contextualization)

- 2–3 question application prompt

Composition (Writing Skills)

Microlearning Strategies: Just-in-time support aligned with assignment needs. This progression shifts from understanding a writing rule to practicing it in a low-stakes way, before applying that knowledge to help a peer.

- Thesis development mini-lesson + rewrite task

- Source integration example + quick practice

- Citation check activity

Engineering (Problem Solving)

Microlearning Strategies: Reinforces mastery through incremental steps. This sequence guides students from observing an expert’s process, to deconstructing the steps in an example, and finally to independent execution.

- Concept explanation: 5-minute breakdown of the forces in a free body diagram

- Worked example: step-by-step how to calculate support

- Short practice with immediate feedback

Nursing (Clinical Skills)

Microlearning Strategies: Supports self-regulation and applied learning. This progression moves from modeling a professional standard to internalizing the steps through a checklist.

- Short demonstration video

- Skills checklist

- Scenario-based self-assessment

Common Microlearning Pitfalls (and How to Fix Them)

Pitfall 1: Chunking Without Purpose

Breaking content into smaller pieces without clear objectives can lead to fragmentation.

Fix: Ensure each segment has a single learning goal and an aligned activity (Mayer, 2021).

Pitfall 2: Overloading with Too Many Micro-Options

Too many videos, tools, or choices can overwhelm learners.

Fix: Curate 2–3 high-value resources per concept and clearly label what is essential vs. optional.

Pitfall 3: Loss of Big-Picture Coherence

Learners may struggle to see how pieces fit together.

Fix: Use module overviews, concept maps, or weekly summaries to help learners connect segments.

Pitfall 4: Passive Consumption

Short videos alone do not improve learning.

Fix: Pair each segment with an active element that involves retrieval, application, or reflection.

Pitfall 5: Assuming Microlearning Works Everywhere

Not all content benefits from microlearning.

Fix: Use microlearning selectively (see below).

When Microlearning May Not Be the Best Approach

Microlearning is powerful but not universal. It may not be the best approach in cases like these:

- Deep discussions or storytelling-based lectures can benefit from longer formats where ideas build over time.

- Capstone-level integration may require fewer, longer sessions rather than many small ones.

- Complex synthesis tasks often require extended, uninterrupted engagement.

The Bottom Line

Ultimately, shifting toward microlearning is about building a kind of cognitive momentum. By breaking complex threshold concepts into modular, intentionally sequenced units that scaffold more sophisticated synthesis, we can help learners stay in the "flow" of the learning experience rather than getting overwhelmed by the sheer volume of a single sitting.

This structured approach also allows learners to master the essential building blocks of a field before being asked to apply them to those broader, integrative skills we ultimately value. If you’ve read this far, why not try taking just one "heavy lift" topic in your course and deconstructing it into a few purposeful, linked segments? You might just find that providing that extra bit of breathing room makes the climb toward those big, complex learning goals feel more attainable for everyone!

References

- Ambrose, S. A., Bridges, M. W., DiPietro, M., Lovett, M. C., & Norman, M. K. (2010). How learning works: Seven research-based principles for smart teaching. Jossey-Bass.

- CAST. (2024a). Build fluencies with graduated support for practice and performance. Universal Design for Learning Guidelines version 3.0.

- CAST. (2024b). Cultivate multiple ways of knowing and making meaning. Universal Design for Learning Guidelines version 3.0.

- CAST. (2024c). Design options for perception. Universal Design for Learning Guidelines version 3.0.

- CAST. (2024d). Enhance capacity for monitoring progress. Universal Design for Learning Guidelines version 3.0.

- Mayer, R. E. (2021). Multimedia learning (3rd ed.). Cambridge University Press.